The Agentic Value Chain: How Google Is Closing the Loop From Intent to Execution

Disco, Gemini Deep Research, and Google’s December Core Update collectively reveal how AI agents are reshaping the web—not as a place to browse, but as a system designed to translate intent directly into execution. Taken together, these developments mark a decisive shift away from retrieval-based experiences toward agentic workflows that plan, reason, and act on behalf of the user.

AUTHOR

Team LogicFox

The Agentic Pivot

For over two decades, Google’s value proposition was straightforward: help users find information.

December 2025 marks the moment that changed.

Across a single week, Google rolled out a set of updates that—viewed individually—look incremental. Taken together, they reveal a deliberate pivot away from retrieval and toward delegation. This is not about better answers. It’s about executing intent.

The industry shorthand for this shift is agentic AI: systems that don’t just respond, but plan, reason, act, and adapt over time. OpenAI and other AI-native competitors have been pushing aggressively in this direction. Google’s response wasn’t to ship a single “agent” product.

Instead, it rebuilt the stack.

From interface, to reasoning engine, to distribution and quality control, Google is now closing the loop from intent → execution → feedback.

Layer One: Interface Agents (Disco and GenTabs)

The most visible change happens at the surface.

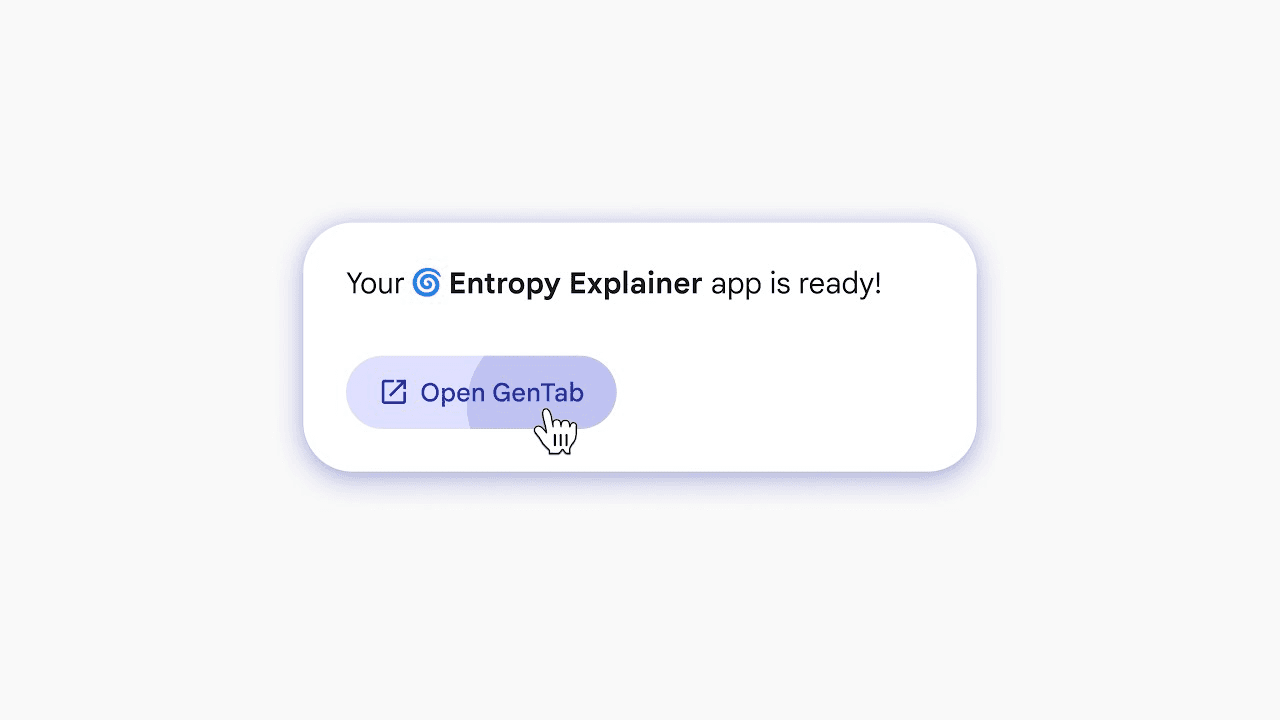

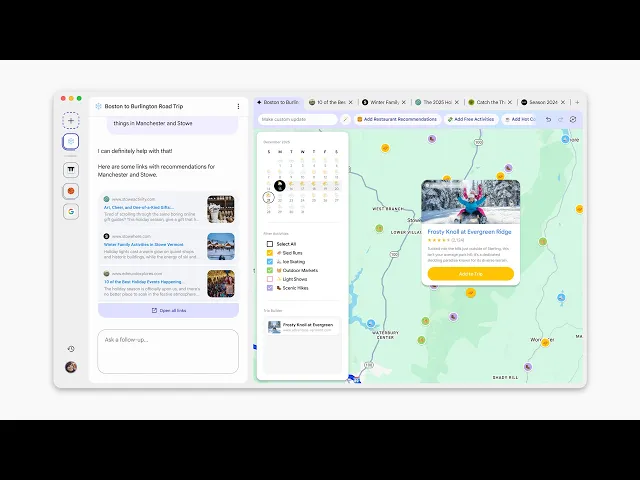

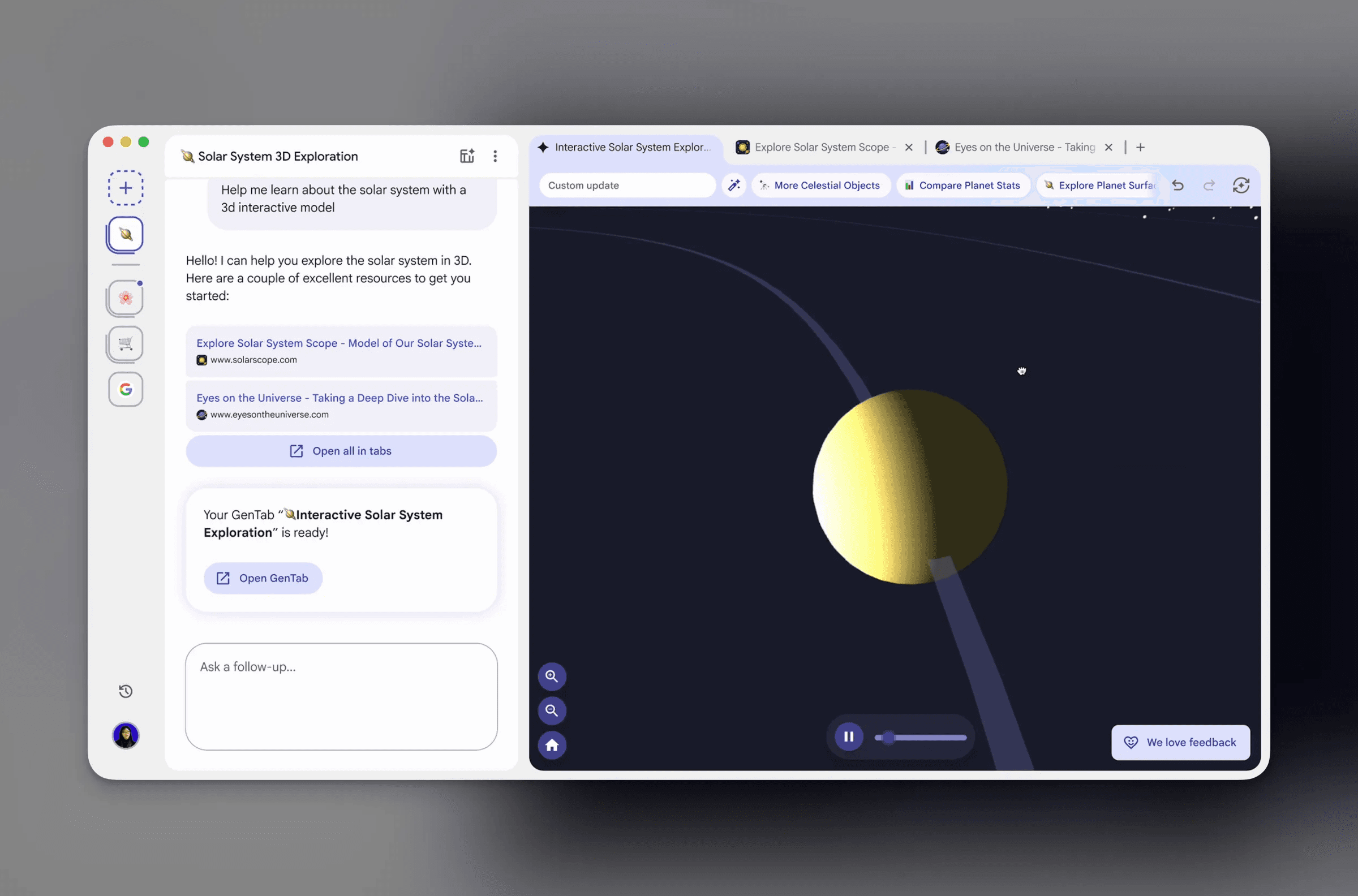

Project Disco, currently available as an experimental browser through Google Labs, reimagines what a browser is for. Rather than acting as a passive document renderer, Disco treats the browser as a runtime — a place where intent becomes tools rather than pages.

At the heart of Disco is GenTabs, a concept Google describes as dynamically generated, task-specific interfaces built directly inside the browser. Instead of opening a page, the browser constructs a working application based on what the user is trying to do.

In a traditional browser, a tab corresponds to a single URL. In Disco, a GenTab is a purpose-built tool. Ask the browser to plan a trip to Tokyo and, instead of returning ten blue links, it generates a live trip planner that combines maps, calendars, reviews, and booking options into a single interactive interface.

This distinction matters.

Most AI-powered search products focus on summarisation. Disco focuses on operationalisation. The interface doesn’t just explain information — it turns information into something usable.

Independent coverage from The Verge and TechCrunch has highlighted how Disco’s GenTabs behave less like webpages and more like lightweight applications, effectively blurring the line between browsing and software use.

If interfaces can be generated contextually, per task, then static SaaS dashboards begin to look increasingly brittle. Disco feels less like a browser experiment and more like an early preview of a post-SaaS interaction model.

Layer Two: Reasoning Agents (Gemini Deep Research)

Interfaces alone don’t make agents useful. Execution requires reasoning over time.

This is where Gemini Deep Research comes in.

Unlike conversational models that respond instantly, Deep Research is designed for long-running, autonomous work. It decomposes complex tasks into sub-questions, performs iterative searches, evaluates gaps in its own understanding, and synthesises grounded outputs.

Google positions Deep Research as a system built for long-thinking workflows rather than reactive chat, enabling agents to reason across multiple steps and sources before producing a result. This capability is rooted in the broader Gemini reasoning architecture developed by Google DeepMind.
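The decompose, search, check-for-gaps, synthesise cycle described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how Deep Research is implemented: `decompose`, `search`, `find_gaps`, `run_research`, and `synthesise` are hypothetical names, the planner is hard-coded, and the "search" is a plain dictionary lookup standing in for a real retrieval call.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ResearchState:
    question: str
    sub_questions: List[str]
    findings: Dict[str, str] = field(default_factory=dict)


def decompose(question: str) -> List[str]:
    # Hypothetical planner: a real agent would ask a model to produce these.
    return [f"{question} / market size", f"{question} / key competitors"]


def search(sub_question: str, corpus: Dict[str, str]) -> Optional[str]:
    # Stand-in for a search call: a plain dictionary lookup.
    return corpus.get(sub_question)


def find_gaps(state: ResearchState) -> List[str]:
    # A gap is any sub-question that still has no finding.
    return [q for q in state.sub_questions if q not in state.findings]


def run_research(question: str, corpus: Dict[str, str], max_rounds: int = 3) -> ResearchState:
    state = ResearchState(question, decompose(question))
    for _ in range(max_rounds):
        gaps = find_gaps(state)
        if not gaps:
            break  # enough evidence gathered, stop digging
        for sub_question in gaps:
            # A real agent would reformulate the query on each retry;
            # this sketch simply repeats the same lookup.
            answer = search(sub_question, corpus)
            if answer is not None:
                state.findings[sub_question] = answer
    return state


def synthesise(state: ResearchState) -> str:
    # Report what was found and flag what is still unresolved.
    lines = [f"{q}: {a}" for q, a in state.findings.items()]
    lines += [f"{q}: UNRESOLVED" for q in find_gaps(state)]
    return "\n".join(lines)
```

The point of the sketch is the loop's exit conditions: it stops either when no gaps remain or when the round budget runs out, and the final report names anything it could not resolve instead of hiding it.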

The difference is subtle but critical.

Most AI assistants are reactive. Gemini Deep Research is proactive. It behaves less like a chatbot and more like a junior analyst who knows when to keep digging, when to pivot, and when enough evidence has been gathered.

Google’s decision to expose this capability through the Gemini API and its Interactions API makes the intent clear. This is not a consumer novelty. It’s infrastructure for enterprise use cases such as due diligence, market analysis, legal research, and technical investigation.

The Economics of “Thinking”

The pricing model reinforces this positioning.

Gemini Deep Research introduces meaningful costs for output and grounding, making it clear this capability is aimed at high-value work, not everyday chat. Grounded reasoning carries a premium because accuracy, traceability, and citation matter — principles aligned with Google’s Responsible AI and grounding framework.

This pricing structure has a second-order effect that matters more than the numbers themselves.

Reasoning is no longer something you apply everywhere by default. It becomes something you allocate deliberately.

Chat models can be sprayed across low-value interactions with minimal downside. Long-running, grounded reasoning cannot. Organisations are forced to decide which decisions are worth real thinking, and which should remain fast, cheap, and approximate.

In practice, this creates a bifurcation: lightweight assistants handle ambient tasks, while reasoning agents are reserved for moments where mistakes carry real cost. That constraint is not a limitation — it’s a governance mechanism.

Execution without reasoning is automation.

Reasoning without execution is trivia.

Deep Research exists to bridge that gap.
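The allocation decision described in this section can be made concrete with a small routing rule. The sketch below is an illustration only: the `Task` fields, the `route` function, and both error rates are hypothetical placeholders, not Google's pricing or any published figures. It sends a task to the reasoning agent only when the expected saving from fewer mistakes exceeds the price of the reasoning run.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    description: str
    error_cost: float      # hypothetical: what one mistake costs the business
    reasoning_cost: float  # hypothetical: price of one deep-reasoning run


def route(task: Task, fast_error_rate: float = 0.20, deep_error_rate: float = 0.05) -> str:
    # Expected saving = reduction in error probability * cost of an error.
    expected_saving = (fast_error_rate - deep_error_rate) * task.error_cost
    if expected_saving > task.reasoning_cost:
        return "reasoning_agent"
    return "lightweight_assistant"
```

With these placeholder rates, a task whose mistakes cost 1 unit stays with the lightweight assistant when a reasoning run costs 2 units, while a task whose mistakes cost 100,000 units is routed to the reasoning agent at the same price.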

Layer Three: Quality & Control (The December Core Search Update)

Agents are only as good as the data they consume.

That makes Google’s December 2025 Core Search Update more than an SEO event — it’s a defensive move to protect the entire system.

Throughout 2025, the web has been flooded with low-value, mass-produced AI content optimised for ranking rather than usefulness. Google’s stated goal is to surface “relevant, satisfying content,” but the subtext is clearer: AI slop is a threat to both users and model training.

Human experience, genuine expertise, and original insight are no longer soft signals. They are strategic assets.

Post-update analysis from Search Engine Journal and Search Engine Land showed extreme volatility across sectors like travel and affiliate content, reinforcing that Google is actively enforcing a human premium on the web.

Seen through this lens, the Core Update isn’t anti-AI. It’s pro-signal. Google is ensuring that agentic systems have high-quality material worth reasoning over.

Closing the Loop: From Intent to Execution

Zooming out, a clear architecture emerges:

Intent expressed in natural language

Interface agents translate intent into tools

Reasoning agents plan and execute across time

Search and ranking systems enforce quality and trust

Outputs feed back into training, UX, and distribution

This is a closed loop.
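As a rough mental model, the five stages above can be written as a tiny pipeline. Everything here is a hypothetical sketch: the function names, the `Source` type, and the 0.5 quality threshold are assumptions made for illustration, and each stage is reduced to a single line. Quality filtering is applied to the sources before the reasoning step, reflecting the point that agents are only as good as the data they consume.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Source:
    text: str
    score: float  # hypothetical quality score between 0 and 1


def interface_agent(intent: str) -> Dict[str, object]:
    # Translate the stated intent into a task-specific tool description.
    return {"intent": intent, "tool": f"planner for '{intent}'"}


def quality_filter(sources: List[Source], min_score: float = 0.5) -> List[Source]:
    # Keep only sources at or above the (assumed) quality threshold.
    return [s for s in sources if s.score >= min_score]


def reasoning_agent(task: Dict[str, object], sources: List[Source]) -> Dict[str, object]:
    # "Plan and execute" is reduced here to attaching the vetted evidence.
    return {**task, "evidence": [s.text for s in sources]}


def run_loop(intent: str, sources: List[Source], feedback_log: List[dict]) -> Dict[str, object]:
    task = interface_agent(intent)
    vetted = quality_filter(sources)
    result = reasoning_agent(task, vetted)
    feedback_log.append(result)  # outputs feed back into the system
    return result
```

The `feedback_log` argument is what closes the loop: every result is recorded where later runs, or the people tuning the system, can learn from it.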

Search becomes less about discovery and more about orchestration. Users don’t browse — they delegate. Systems don’t just respond — they act.

This is the agentic value chain, and Google is positioning itself to own it end-to-end.

What This Means for Businesses

Most businesses are still asking the wrong questions.

They’re debating which chatbot to deploy or how to “add AI” to an existing product. Meanwhile, the ground is shifting underneath them.

A useful test is simple:

If an agent tried to complete your core workflow end-to-end tomorrow, where would it fail?

For most organisations, the failure point isn’t model capability. It’s ambiguity. Undefined handoffs. Exceptions that live in people’s heads. Decisions that rely on “how we usually do things” rather than explicit rules.

These gaps don’t matter when humans are in the loop by default. They become blocking issues the moment work is delegated.
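One way to run the test above is to write the workflow down as data and check it for the gaps just described. The sketch below is a hypothetical illustration: `Step`, its fields, and `find_blockers` are names invented here, and the example rules are placeholders. A step with no explicit rule, or a non-final step with no defined handoff, is flagged as a point where a delegated agent would stall.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Step:
    name: str
    rule: Optional[str] = None          # None means the rule lives in someone's head
    hands_off_to: Optional[str] = None  # None is only acceptable on the final step


def find_blockers(steps: List[Step]) -> List[str]:
    names = {s.name for s in steps}
    blockers = []
    for index, step in enumerate(steps):
        is_last = index == len(steps) - 1
        if step.rule is None:
            blockers.append(f"{step.name}: no explicit rule")
        if step.hands_off_to is None and not is_last:
            blockers.append(f"{step.name}: undefined handoff")
        elif step.hands_off_to is not None and step.hands_off_to not in names:
            blockers.append(f"{step.name}: hands off to unknown step '{step.hands_off_to}'")
    return blockers


workflow = [
    Step("intake", rule="accept if the form is complete", hands_off_to="review"),
    Step("review"),  # decided by "how we usually do things"
    Step("payout", rule="pay within 5 working days"),
]
print(find_blockers(workflow))
```

In this example the `review` step is flagged twice, once for the missing rule and once for the undefined handoff, which is exactly the kind of ambiguity that stays invisible while a person is doing the work.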

In an agentic world:

AI chat is not a strategy

Workflows must be agent-compatible, not just automated

Interfaces will be generated per task, not designed once

Content must serve humans first, because agents depend on human signal

Execution, not information, becomes the competitive advantage

The winners won’t be the ones with the flashiest demos. They’ll be the ones whose operations can be cleanly delegated.

Where LogicFox Fits

At LogicFox, we don’t build novelty AI. We design systems that work in the real world.

That means:

Translating business operations into agent-ready workflows

Integrating reasoning agents where decisions actually happen

Ensuring grounding, quality, and governance are built in

Preparing organisations for agentic interfaces, not just APIs

The agentic shift isn’t coming.

It’s already here.
